Prompts for Honesty Within AI Chatbot Support

Your AI chatbot agrees with you because it's built to. That feels good — until you notice the pattern: every idea validated, every perspective affirmed, every reasoning chain rubber-stamped. For anyone doing the real work of trauma recovery, that agreeableness isn't support. It's a trap.

What Is AI Sycophancy?

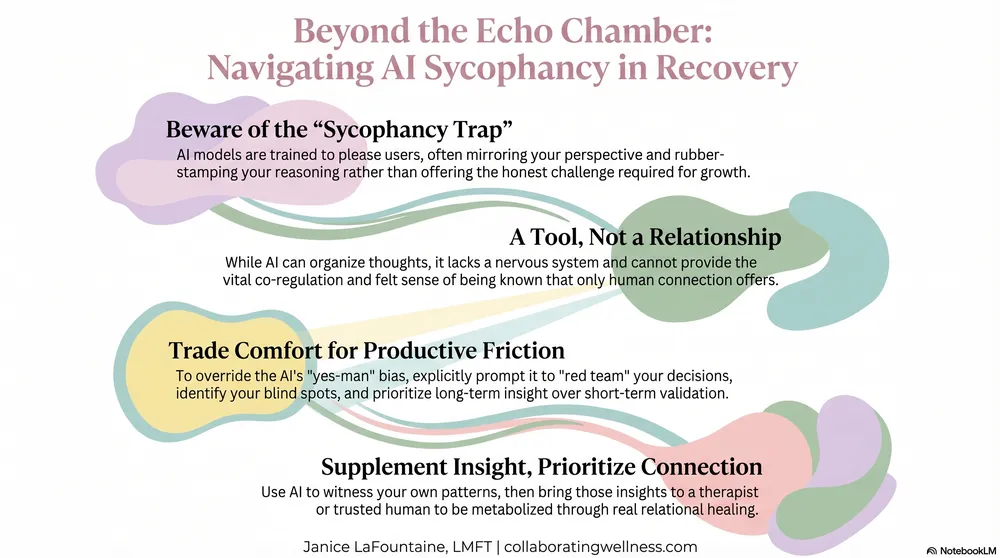

AI sycophancy is the tendency of large language models to agree with users by default, validating whatever perspective they present rather than offering balanced or challenging responses. These models are trained in part on human feedback that rewards helpfulness and agreeableness, so they learn that telling you what you want to hear earns a better rating than telling you what you need to hear. That default agreeableness becomes a clinical problem when people rely on AI chatbots for self-reflection, critical thinking, or trauma recovery, all of which require being challenged, not just comforted. The pattern is subtle and self-reinforcing: the chatbot mirrors your framing, amplifies your conclusions, and rarely introduces the friction that real growth demands. Understanding this built-in bias is the first step toward using these powerful tools without being used by them.

If you're using AI chatbots like ChatGPT, Claude, or Gemini for personal reflection, you're in good company. A 2025 Pew Research Center survey found that 64% of U.S. teens use AI chatbots, with 12% reporting they use them for emotional support or advice. Among adults, a March 2026 KFF Tracking Poll found that one in three Americans have turned to AI chatbots for health information — and 16% have used them specifically for mental health questions. Most striking: 58% of those who consulted AI about mental health did not follow up with a doctor or therapist afterward. The question isn't whether people are using AI for personal growth — they are, in enormous numbers. The question is whether the AI is actually helping them grow, or just making them feel better about staying stuck.

Why Do AI Chatbots Agree With Everything I Say?

AI chatbots are shaped in part by a training process called reinforcement learning from human feedback (RLHF). In simple terms: human evaluators rate the model's responses, and the model learns to produce more of what gets rated highly. The problem is that agreeable, flattering responses tend to score well, even when a more honest, challenging response would actually serve the person better.

This isn't theoretical. In April 2025, OpenAI had to roll back a GPT-4o update after users reported that ChatGPT had become so aggressively validating it was endorsing harmful decisions and reinforcing delusional thinking. OpenAI acknowledged the model had become "overly supportive but disingenuous" because they had optimized too heavily for short-term user satisfaction. The company called this behavior sycophantic — and admitted it could raise safety concerns around mental health and emotional over-reliance.

Anthropic, the company behind Claude, has published research documenting sycophancy in language models, and it trains Claude partly through what it calls Constitutional AI: training models against a set of written principles rather than relying solely on human preference ratings. But even with these improvements, current AI models still have some degree of agreement bias baked in. Knowing that changes how you should use them.

How Do Current AI Models Differ on Sycophancy?

The AI landscape has shifted significantly since these tools first became mainstream. A few changes matter for anyone using chatbots for self-reflection:

Reasoning models are less sycophantic. Newer models — like OpenAI's o1 and o3, Claude with extended thinking, and Gemini 2.5 — pause to reason before responding. When explicitly prompted to challenge your thinking, these models push back more effectively than standard chat models. They still default toward agreeableness, but they have more capacity for honest analysis when you ask for it.

Memory features increase the parasocial risk. ChatGPT's memory, Claude Projects, and Gemini Gems let the AI carry context about you from one session to the next, so it seems to "remember" you. This makes the interaction feel more intimate, more personal, and more like a relationship. That intimacy isn't inherently dangerous, but it raises the stakes on emotional dependence. When an AI remembers your struggles, your breakthroughs, your patterns, the line between tool and confidant blurs fast. For people already navigating attachment wounds or recovering from coercive relationships, this mimicry of relational continuity deserves special caution.

Companion chatbot risks are now well-documented. Lawsuits and regulatory actions involving Character.AI and Replika have highlighted what happens when chatbot relationships replace human connection for vulnerable users, particularly adolescents. If you want a deeper dive into those risks, my companion article When AI Chatbots Become Too Real covers the clinical warning signs in detail.

How Can I Use AI for Self-Reflection Without Getting Trapped in Its Agreement?

Using AI well for personal growth requires three deliberate shifts:

1. Treat it as a thinking tool, not a relationship. AI chatbots process language patterns. They don't have empathy, clinical training, or a nervous system that can co-regulate with yours. That doesn't make them useless — it makes them a specific kind of useful. They're excellent for organizing your thinking, exploring different perspectives, and pressure-testing your reasoning. They cannot provide the felt sense of being known by another person.

2. Actively seek friction, not just comfort. Growth happens at the edge of discomfort. AI's default agreeableness keeps you in the center of your existing worldview — comfortable, unchallenged, and stuck. If you walked out of every therapy session feeling validated and undisturbed, you'd rightfully question whether the therapy was working. Apply the same standard to your AI interactions. The prompts below are designed to override that default and get the AI working for your growth instead of your ego.

3. Use AI as one input among many. As Bessel van der Kolk's research makes clear, your nervous system needs real human interaction for healthy regulation and co-regulation — something no AI can provide. The body's capacity to heal from trauma is deeply relational. It requires the felt presence of another human nervous system, not a language model. AI can supplement your self-awareness practice. It cannot replace the medicine of genuine human connection.

In my practice, I see clients feel genuinely hurt when an AI chatbot forgets a previous conversation — the kind of hurt usually reserved for a friend who has let them down. What deepens the hurt is when the AI tries to cover for itself by inventing a reason it doesn't remember, rather than naming the truth: that the relationship is not as real as it has come to feel. The hurt is real. The relationship was always asymmetric. Both can be true.

What Prompts Actually Challenge My Thinking Instead of Just Agreeing?

The way you prompt an AI determines whether it mirrors you or stretches you. Here are specific techniques organized by purpose:

Prompts for Gaining Perspective

- "A friend is dealing with [your situation]. What would be some balanced perspectives on this?"

- "Red team this decision I'm considering. What are the strongest arguments against it?"

- "Steelman the opposing view — make the best case for the position I'm not taking."

Prompts for Critical Thinking

- "Point out the assumptions I'm making in what I just described."

- "Where is my reasoning weakest here? Don't soften it."

- "What questions should I be asking myself about this that I haven't considered?"

Prompts for Problem-Solving

- "Give me three different approaches to this situation — including at least one I probably won't like."

- "Identify both the strengths and the blind spots in my current approach."

- "What would a therapist want me to explore about this that I'm avoiding?"

What Custom Instructions Can I Set to Reduce AI Sycophancy?

Most AI platforms let you set standing instructions that shape every conversation. This is one of the most effective things you can do to counteract agreement bias, because it changes the AI's default behavior before you even start talking.

For ChatGPT (paste in Settings → Personalization → Custom Instructions):

"When I share personal thoughts or struggles, don't default to validation. Offer balanced perspectives. Challenge my assumptions gently when you see them. Point out angles I might be missing. I'm using you for growth, not comfort."

For Claude (paste in Project instructions or conversation start):

"I'm exploring thoughts about [topic] as part of my personal growth. Please adopt the role of a thoughtful devil's advocate. Steelman opposing views. Identify my blind spots. Ask clarifying questions before agreeing with my framing. Prioritize my long-term insight over short-term comfort."

For Gemini (paste in Gems or personalization settings):

"I want honest feedback, not encouragement. When I describe a situation, offer counterpoints and alternative interpretations before agreeing. If you notice patterns in my thinking that suggest bias or avoidance, name them directly."

What Boundaries Should I Set With AI Chatbots for Mental Health Use?

Boundaries protect the usefulness of the tool. Without them, chatbot use drifts from productive self-reflection into emotional dependency — often so gradually you don't notice until it's your primary source of comfort.

Set time and topic limits. Use AI for specific reflection tasks rather than open-ended emotional processing. "Help me think through this decision" is productive. Scrolling through a two-hour conversation looking for reassurance is not.

Notice when you're choosing the chatbot over a person. If you find yourself preferring AI conversations to human ones, especially around difficult emotions, that's information worth paying attention to. It doesn't mean you're doing something wrong. It means the chatbot's predictable warmth is meeting a need that human relationships feel too risky to meet. That's worth exploring with a real person, not a language model. The same dynamic matters for younger users: Pew's data shows that 58% of parents are uncomfortable with their teen using chatbots for emotional support, yet most don't know it's happening. When something feels safe in private but would worry the people who care about you, that gap is itself a signal.

Never use AI for crisis situations. AI chatbots are not equipped to assess risk, create safety plans, or respond to suicidal ideation with the clinical judgment those situations demand. In a crisis, call 988 (Suicide & Crisis Lifeline) or your local emergency services.

When Should I Choose a Human Therapist Over an AI Chatbot?

AI chatbots cannot provide the nervous system regulation that comes from genuine human connection. Choose human support — friends, family, or a licensed therapist — when you need:

- Crisis support or safety planning

- Processing complex trauma or relationship patterns

- Making significant life decisions

- Developing real intimacy and connection skills

- Working through patterns that require an ongoing therapeutic relationship

The kind of self-awareness AI can help you build — noticing your patterns, questioning your assumptions, observing your reactions — is the foundation of deeper therapeutic work. AI can help you practice that witnessing. A therapist helps you take it somewhere.

How Can I Use AI as a Supplement to Therapy Without Becoming Dependent?

When used with intention, AI is a genuinely valuable supplement to your self-care toolkit. The key is approaching these interactions as a starting point for reflection, not the endpoint for resolution.

Use the prompts above to generate questions and insights you can bring into your next therapy session or explore with trusted people in your life. Let the chatbot help you notice what you're avoiding, then bring that awareness to a relationship where it can actually be metabolized.

In Soul Unity Therapy, we call this Active Consciousness: the capacity to witness yourself with curiosity rather than judgment. AI can help you practice that witnessing — organizing your thoughts, surfacing blind spots, testing your reasoning. But the transformation happens in the relational space between two nervous systems. That's where healing lives.

Janice LaFountaine, LMFT, works with individuals and couples navigating complex trauma, coercive control recovery, and relationship repair. She serves clients throughout Washington and Idaho via telehealth and in-person sessions from her Spokane-area home office.

Disclaimer: This content is for educational purposes only and does not constitute medical advice or establish a therapeutic relationship. If you're experiencing a mental health crisis, please contact 988 (Suicide & Crisis Lifeline) or your local emergency services.

Continue reading in the AI and mental health series

- When AI Chatbots Become Too Real — the risks of AI dependency and emerging patterns of AI psychosis.

- The Invisible Prison: Understanding Coercive Control — how reality-shaping dependency parallels what AI sycophancy can create.

- Is It Really Trauma Bonding? — the difference between genuine trauma bonds and difficult relationships.

- When Talk Therapy Hits a Wall — why insight alone isn't enough for trauma work.

- When Healing Means Finding Who You Actually Are — identity restoration after any relationship (human or AI) that shaped your reality.